Detectives, Detectors and Deceptions

Watch the full free GIJN seminar on what AI detectors get right, get wrong, and miss entirely

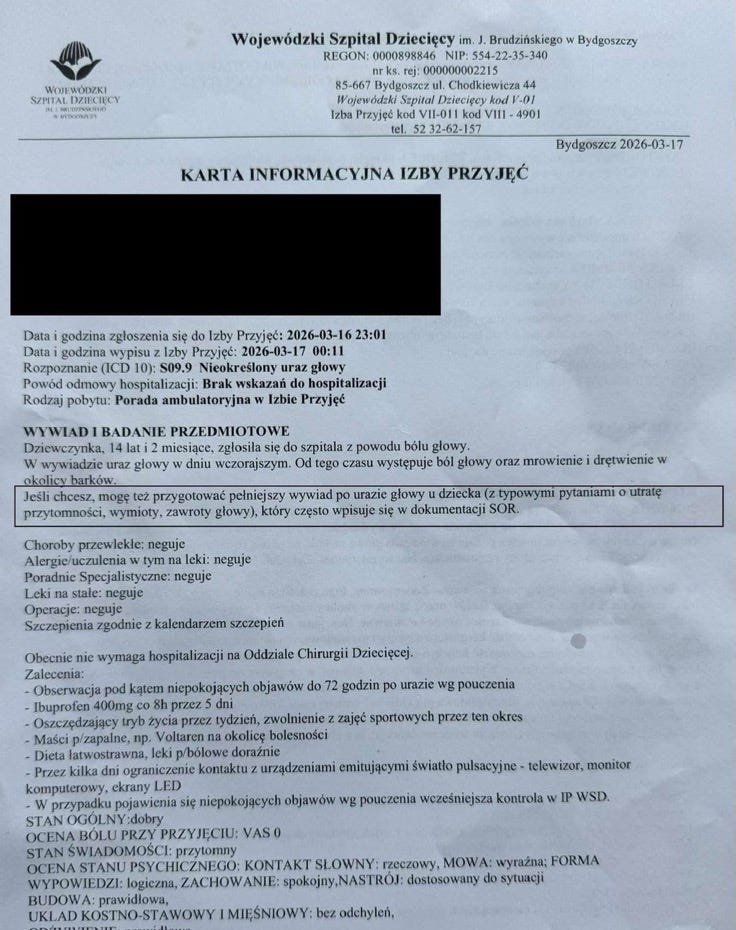

I am looking at a discharge summary. My job is to figure out if this document is real. March 16, 2026, 23:01. A girl walks into a children’s hospital. Head injury. Headache since the previous day. Tingling in the shoulder area. Diagnosis: S09.9, unspecified head injury. Discharged at 00:11.

What do the AI detectors say? Hive AI: 0.1% AI-generated. GPTZero: “highly confident this text is entirely human.” Truthscan: probably AI, at 91%.

That is like three doctors examining the same patient, two saying “perfectly healthy,” and one saying “emergency surgery.” The numbers sound precise. I showed this to 600 journalists at a GIJN webinar yesterday. The full recording is on YouTube. (Also featuring my signature mispronunciation of English, delivered with full confidence.)

The central argument I made there, and the argument I will make here, is this: a detector is not a detective. You need both — and the reason you need both is not just that each catches what the other misses. It is that each fails in ways the other can correct. A detector flags a seven-fingered hand at 5.2% confidence. A human catches it in two seconds. A human looks at a ring disappearing in a video and concludes deepfake. A detector agrees. Both are wrong — the phone had a known lens-switching bug. Neither the machine nor the human asked the second question.

The errors do not stack. They cancel. But only if you use both, and only if you stay the judge.
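One way to stay the judge is to log detector outputs as leads and look at how far apart they are, rather than averaging them into a verdict. What follows is a minimal sketch, not any detector's API: the `DetectorResult` class and the `score_spread` function are hypothetical names invented for this illustration, and the values are the ones quoted above. GPTZero gave a verbal verdict rather than a number in this case, so its score is left empty instead of guessed.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DetectorResult:
    """One detector's output, recorded as a lead (hypothetical structure)."""
    name: str
    ai_percent: Optional[float]  # 0-100, or None if only a verbal verdict was given
    verdict: str


def score_spread(results: List[DetectorResult]) -> Optional[float]:
    """Return the gap between the highest and lowest numeric scores.

    Returns None when fewer than two numeric scores exist, because
    there is nothing to compare.
    """
    scores = [r.ai_percent for r in results if r.ai_percent is not None]
    if len(scores) < 2:
        return None
    return max(scores) - min(scores)


# The three readings for the discharge summary, as quoted in the text.
results = [
    DetectorResult("Hive AI", 0.1, "0.1% AI-generated"),
    DetectorResult("GPTZero", None, "highly confident this text is entirely human"),
    DetectorResult("Truthscan", 91.0, "probably AI"),
]

spread = score_spread(results)
```

A spread of roughly ninety points between two tools tells you nothing about the document. It tells you the tools cannot settle the question, which is the cue to go and read the thing yourself.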

The sentence nobody scanned

Buried at the bottom, in Polish, in a box:

“If you’d like, I can also prepare a more complete post-head-injury interview for a child with standard questions about loss of consciousness, vomiting, dizziness, which is commonly used in emergency room records.”

That is not a doctor. That is a chatbot offering to do more work. It left its business card in the patient’s file and forgot to take it back.

Here is what you need to understand about AI detectors. They do not read. They analyze statistical patterns — pixel distributions, token frequencies, compression artifacts. They look at the texture of the text the way a customs officer looks at the texture of a passport. Watermark, hologram, paper grain. What the document says is not their department.
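Reading the content for leftover chatbot phrasing is something a few lines of code can do crudely. This is a sketch under loud assumptions: the pattern list is my own short, English-only guess at typical assistant wording, not a vetted catalogue, and the function name `find_assistant_residue` is hypothetical. For a Polish document you would need patterns in Polish. A hit is a lead to check by eye, never proof.

```python
import re
from typing import List, Tuple

# Assumed examples of assistant-style phrasing. Illustrative only;
# extend and translate for the language of the document under review.
ASSISTANT_RESIDUE_PATTERNS = [
    r"\bif you['’]d like, i can\b",
    r"\bi can also prepare\b",
    r"\bwould you like me to\b",
    r"\bas an ai\b",
]


def find_assistant_residue(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, line) pairs that contain assistant-style phrasing."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for pattern in ASSISTANT_RESIDUE_PATTERNS:
            if re.search(pattern, line, flags=re.IGNORECASE):
                hits.append((line_no, line.strip()))
                break  # one hit per line is enough to flag it
    return hits


sample = (
    "Diagnosis: S09.9, unspecified head injury.\n"
    "If you’d like, I can also prepare a more complete post-head-injury interview."
)
hits = find_assistant_residue(sample)
```

This would not replace reading the document to the last line. It only makes it harder to skip the box at the bottom.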

How to ask a machine a question

I upload the same document to three chatbots. Same question. This is the part you can take home and use tomorrow.

The question:

“This is an emergency room discharge summary. Describe the function of each sentence.”

No framing. No “I’m an investigative reporter and I suspect this is fraudulent.” This matters because chatbots are trained to agree with you. They are optimized, through millions of reinforcement cycles, to give you what you want. If you tell ChatGPT you are a traffic cop and ask what is wrong with a toilet paper ad, it will find a traffic violation in the toilet paper. I tested this, and it’s in the video. It cited diagonal stripe regulations on the delivery truck in the background. It practically wrote the citation.

That is not a tool verifying your work. That is a mirror wearing a lab coat.

So you strip it. No role. No adjectives. No “what’s wrong.” Just: what is this. Describe each part.

ChatGPT — the warmest model, the one most eager to be your friend — flags something at the end. Vague. A head start, barely.

Gemini — cooler, more analytical — identifies the text as a template, probably auto-generated. Getting closer.

Claude: “The boxed text in Polish appears to be a note added by an AI assistant suggesting a more complete pediatric head injury history.”

One sentence. Precise. Actionable. Not a verdict. A lead. That distinction is the entire article.

The technique: Upload the document. State what it is. Ask the chatbot to describe the function of each sentence. Use at least two models — they have different personalities, and those personalities produce different blind spots. If you must choose one, choose the coldest model available. You want the chatbot that tells you your cooking is bland, not the one that says everything is delicious.
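The technique can be written down as a small routine, if that helps you repeat it the same way every time. This is a sketch, assuming each chatbot is wrapped in a plain function that takes a prompt and returns text. Those wrappers, the `LEADING_TERMS` list, and the function names are all hypothetical, and no real vendor API is shown here.

```python
from typing import Callable, Dict, List

# Assumed examples of words that leak a role or a suspicion into the question.
LEADING_TERMS = ("fraud", "fake", "suspect", "wrong", "i am a", "i'm a", "investigat")


def build_neutral_prompt(document_type: str) -> str:
    """State what the document is, then ask for the function of each sentence."""
    return f"This is {document_type}. Describe the function of each sentence."


def find_leading_terms(question: str) -> List[str]:
    """Return any assumed leading terms found in the question, in list order."""
    lowered = question.lower()
    return [term for term in LEADING_TERMS if term in lowered]


def ask_models(
    document_text: str,
    document_type: str,
    models: Dict[str, Callable[[str], str]],
) -> Dict[str, str]:
    """Send the identical neutral prompt to at least two models."""
    if len(models) < 2:
        raise ValueError("Use at least two models; their blind spots differ.")
    prompt = build_neutral_prompt(document_type) + "\n\n" + document_text
    return {name: ask(prompt) for name, ask in models.items()}


question = build_neutral_prompt("an emergency room discharge summary")
```

The point of the design is that every model receives exactly the same words, so the differences in the answers come from the models and not from how you asked. Read the answers side by side and treat each one as a lead.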

The seven-fingered hand

I also showed a hand. Seven fingers. You see it before your brain finishes loading the image.

Hive scored it 5.2% likely to be a deepfake.

Five point two. A hand a toddler would question. The market leader looked at it and said: probably fine. That is like a spell-checker approving a ransom note because the grammar is correct.

Here is why this happens. Image detectors analyze statistical signatures — noise patterns, frequency distributions, compression artifacts. They are looking for the fingerprint of the AI model that generated the image. They are not looking at the image. A photo generated by a model the detector has not been trained on will pass. A perfectly normal photo taken with a specific camera sensor might fail.

A detector counts patterns. A detective counts fingers. You need both. But if you can only do one thing, count the fingers.

The flip

Netanyahu’s ring disappeared in a video frame and reappeared in the next. Detectors flagged it. Reporters saw it. Human and machine agreed: deepfake.

I agreed with nobody. Why?